Developer productivity metrics are not performance scores. They are system signals.

Yet most teams still treat them as evaluation tools instead of diagnostic tools, and that’s where things go wrong.

If you’ve ever heard:

You’re not alone.

We are here to clarify what developer productivity metrics actually measure, which ones signal flow and quality, and how to use them safely, without distorting behavior or damaging trust.

If you want a full implementation framework, read our main guide on measuring developer productivity. This article focuses specifically on the metrics themselves: what they signal and what they don't.

Developers distrust metrics for one core reason: they've seen metrics used for control instead of improvement.

When teams track:

…turns knowledge work into a factory output model. It doesn't fit, and engineers know it doesn't fit, which is why they adapt.

They don't adapt to be dishonest. They adapt because the system is measuring the wrong thing, and they're rational actors responding to it.

Software engineering is a socio-technical system. Measuring it like manufacturing creates defensive behavior, metric gaming, and loss of psychological safety.

The DORA research program, which has studied engineering performance across thousands of teams since 2014, found that high-performing teams are distinguished not by raw output metrics but by delivery flow, stability, and the conditions that make sustainable work possible. Individual activity tracking doesn't appear in any high-performance cluster they've identified.

There’s a critical distinction:

Developer productivity metrics should measure system dynamics, not evaluate people. When metrics become performance scores, they stop being truthful.

Developer productivity is not output volume.

It is the ability to translate intent into working, reliable software with minimal friction and sustainable cognitive load.

It exists at three levels:

Individual level: Can this developer focus, solve the right problem, and ship correct solutions without unnecessary friction from tooling, process, or context switching?

Team level: Can the team collaborate, review, integrate, and deliver together without losing work to handoff delays, unclear ownership, or coordination overhead?

System level: Does the entire engineering workflow efficiently convert effort into customer value, or is significant capacity being consumed by rework, blocked queues, and compounding dependencies?

Most developer efficiency metrics fail because they focus on the first level while productivity actually emerges at the third. Outputs don’t equal outcomes.

A developer who writes fewer lines of better code may improve system performance more than someone who generates constant activity.

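Microsoft Research's SPACE framework, developed through analysis of engineering teams at Microsoft and published in ACM Queue, makes this structural. It spreads productivity across five dimensions instead of collapsing it into one number: Satisfaction and well-being, Performance, Activity, Communication and collaboration, and Efficiency and flow.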

The explicit intent of the framework is to prevent single-metric approaches, which consistently distort what they're trying to measure.

GitHub's internal research into their own engineering teams found that optimizing for individual commit frequency had no meaningful relationship to their most important delivery outcomes. What did correlate: PR cycle time and deployment stability. The signal lay not in individual activity but in system flow.

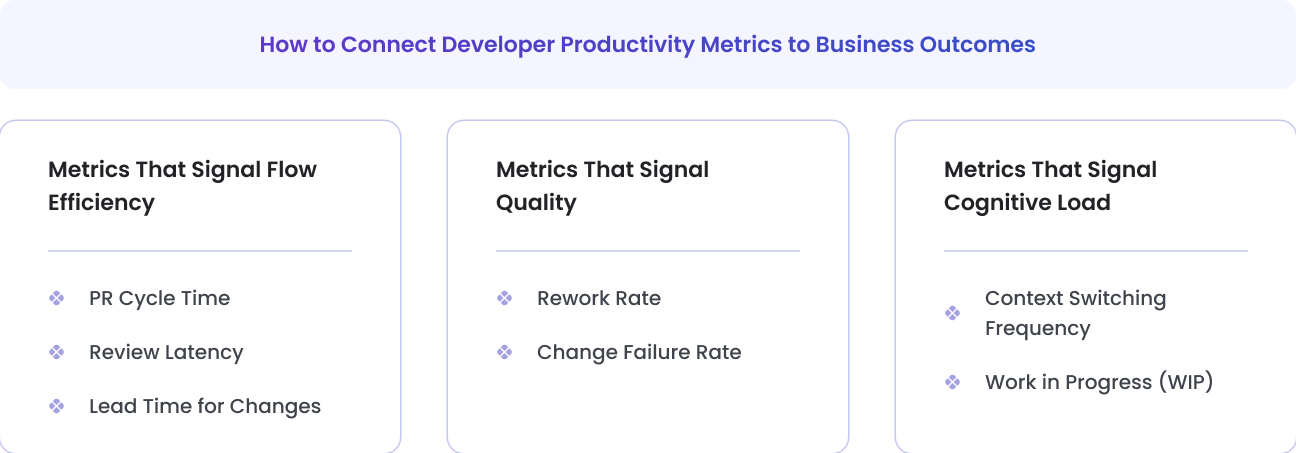

Not all metrics measure the same thing. High-quality measurement requires separating signal types.

High-signal developer productivity metrics span flow, quality, and cognitive sustainability, not just speed.

Below are metrics that provide meaningful system insight.

Each includes the signal it measures, what it reveals, what it cannot tell you, and when it becomes dangerous.

PR Cycle Time

Signal:

How long it takes for work to move from creation to merge.

Reveals:

Cannot tell you:

Becomes dangerous when:

Teams optimize for faster merges instead of better reviews.
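Here is a minimal sketch of computing PR cycle time. It assumes each pull request can be exported as a record with hypothetical `created_at` and `merged_at` timestamps. The sketch reports the median over merged PRs, so a single long-lived PR cannot dominate the number.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class PullRequest:
    # Hypothetical record shape; field names are assumptions, not a Git host's API.
    created_at: datetime
    merged_at: Optional[datetime]  # None while the PR is still open


def pr_cycle_time_hours(prs: list[PullRequest]) -> Optional[float]:
    """Median hours from PR creation to merge; unmerged PRs are skipped."""
    durations = [
        (pr.merged_at - pr.created_at).total_seconds() / 3600
        for pr in prs
        if pr.merged_at is not None
    ]
    return median(durations) if durations else None


prs = [
    PullRequest(datetime(2024, 5, 1, 9), datetime(2024, 5, 1, 15)),  # 6 h
    PullRequest(datetime(2024, 5, 2, 9), datetime(2024, 5, 3, 9)),   # 24 h
    PullRequest(datetime(2024, 5, 3, 9), datetime(2024, 5, 3, 11)),  # 2 h
    PullRequest(datetime(2024, 5, 4, 9), None),                      # still open
]
print(pr_cycle_time_hours(prs))  # 6.0
```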

Time to First Review

Signal:

Time before first review feedback.

Reveals:

Cannot tell you:

Becomes dangerous when:

Developers rush superficial reviews to reduce latency numbers.
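The sketch below measures time to first review feedback. It assumes each PR is available as a hypothetical `(opened_at, review_times)` pair. PRs that have not been reviewed yet are counted separately rather than dropped. Dropping them would make a growing review queue invisible and would flatter the median.

```python
from datetime import datetime
from statistics import median
from typing import Optional


def first_review_latency_hours(
    opened_at: datetime, review_times: list[datetime]
) -> Optional[float]:
    """Hours between opening a PR and its earliest review; None if unreviewed."""
    if not review_times:
        return None
    return (min(review_times) - opened_at).total_seconds() / 3600


def summarize_review_latency(prs: list[tuple[datetime, list[datetime]]]) -> dict:
    """Median latency for reviewed PRs, plus a count of PRs still waiting."""
    latencies = [first_review_latency_hours(opened, reviews) for opened, reviews in prs]
    reviewed = [lat for lat in latencies if lat is not None]
    return {
        "median_hours": median(reviewed) if reviewed else None,
        "awaiting_review": sum(1 for lat in latencies if lat is None),
    }


# Hypothetical data: (opened_at, [review timestamps]).
prs = [
    (datetime(2024, 5, 1, 9), [datetime(2024, 5, 1, 13), datetime(2024, 5, 2, 10)]),  # 4 h
    (datetime(2024, 5, 2, 9), [datetime(2024, 5, 2, 10)]),                            # 1 h
    (datetime(2024, 5, 3, 9), []),                                                    # waiting
]
print(summarize_review_latency(prs))  # {'median_hours': 2.5, 'awaiting_review': 1}
```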

Lead Time for Changes

Signal:

Time from first commit to production.

Reveals:

Cannot tell you:

Becomes dangerous when:

Teams split work artificially to shrink numbers.
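Lead time from first commit to production is easier to act on when it is split into stages. This is a sketch that assumes a hypothetical `Change` record with first-commit, merge, and deploy timestamps. It reports the median for each stage next to the end-to-end median.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class Change:
    # Hypothetical timestamps for one change as it moves through the pipeline.
    first_commit: datetime
    merged: datetime
    deployed: datetime


def hours(start: datetime, end: datetime) -> float:
    return (end - start).total_seconds() / 3600


def lead_time_breakdown(changes: list[Change]) -> dict[str, float]:
    """Median hours per stage, plus the end-to-end lead time for changes."""
    return {
        "commit_to_merge": median([hours(c.first_commit, c.merged) for c in changes]),
        "merge_to_deploy": median([hours(c.merged, c.deployed) for c in changes]),
        "lead_time": median([hours(c.first_commit, c.deployed) for c in changes]),
    }


changes = [
    Change(datetime(2024, 5, 1, 9), datetime(2024, 5, 2, 9), datetime(2024, 5, 2, 15)),
    Change(datetime(2024, 5, 3, 9), datetime(2024, 5, 3, 13), datetime(2024, 5, 5, 13)),
    Change(datetime(2024, 5, 6, 9), datetime(2024, 5, 6, 17), datetime(2024, 5, 7, 9)),
]
print(lead_time_breakdown(changes))
# {'commit_to_merge': 8.0, 'merge_to_deploy': 16.0, 'lead_time': 30.0}
```

The stage medians do not add up to the end-to-end median (8 + 16 is not 30 here). Read the breakdown as a guide to where time tends to go, not as an exact decomposition.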

Rework Rate

Signal:

How much work is being redone shortly after completion.

Reveals:

Cannot tell you:

Becomes dangerous when:

Teams avoid refactoring to “protect” the metric.
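Rework has no single standard definition. One common proxy, sketched here under that assumption, counts modified lines that replace code written only recently. The `(written, modified)` date pairs could come from running `git blame` on each change's parent commit. The 21-day window is an illustrative assumption, not a standard.

```python
from datetime import date


def rework_rate(modified_lines: list[tuple[date, date]], window_days: int = 21) -> float:
    """Share of modified lines that rewrite code written within `window_days`.

    Each tuple is (date the line was originally written, date it was modified).
    Edits to long-standing code are not counted, so refactoring old code does
    not push this number up.
    """
    if not modified_lines:
        return 0.0
    recent = sum(
        1 for written, modified in modified_lines
        if (modified - written).days <= window_days
    )
    return recent / len(modified_lines)


lines = [
    (date(2024, 5, 1), date(2024, 5, 10)),   # 9 days old: counted as rework
    (date(2024, 5, 1), date(2024, 5, 20)),   # 19 days old: counted as rework
    (date(2024, 1, 15), date(2024, 5, 20)),  # months old: ordinary maintenance
    (date(2023, 11, 2), date(2024, 5, 21)),  # months old: ordinary maintenance
]
print(rework_rate(lines))  # 0.5
```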

Change Failure Rate

Signal:

Percentage of deployments causing incidents.

Reveals:

Cannot tell you:

Becomes dangerous when:

Teams hide incidents to preserve numbers.
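Change failure rate is a simple ratio; the hard part is recording failures honestly. The sketch assumes a hypothetical `Deployment` record with a `caused_failure` flag, set when a deployment led to an incident, rollback, or hotfix.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record; `caused_failure` is True when the deployment led to
    # an incident, rollback, or hotfix (however your team links those).
    deploy_id: str
    caused_failure: bool


def change_failure_rate(deployments: list[Deployment]) -> Optional[float]:
    """Fraction of deployments that caused a failure; None if there were none."""
    if not deployments:
        return None  # no deployments means "no data", not a perfect 0% score
    failures = sum(1 for d in deployments if d.caused_failure)
    return failures / len(deployments)


# Twenty deployments in a period, three of which needed a rollback or hotfix.
deployments = [Deployment(f"deploy-{i}", i in {4, 11, 17}) for i in range(20)]
print(change_failure_rate(deployments))  # 0.15
```

The function returns `None` for an empty period so that a quiet month does not show up as a flawless one.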

Context Switching Frequency

Signal:

How often developers shift tasks.

Reveals:

Cannot tell you:

Becomes dangerous when:

Leaders misinterpret switching as inefficiency instead of overload.
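Here is a sketch of counting task switches. It assumes a hypothetical activity log of `(timestamp, task_id)` pairs, which might be derived from ticket status changes. Only a change of task counts as a switch; repeated activity on the same task does not. In keeping with the guidance in this article, aggregate the results to team level before sharing them.

```python
from collections import Counter
from datetime import date, datetime


def task_switches_per_day(events: list[tuple[datetime, str]]) -> dict[date, int]:
    """Count changes of task in an activity log, grouped by calendar day.

    A switch is attributed to the day on which the new task's event occurs.
    Days with no switches do not appear in the result.
    """
    ordered = sorted(events)
    switches: Counter = Counter()
    for (_, previous_task), (timestamp, task) in zip(ordered, ordered[1:]):
        if task != previous_task:
            switches[timestamp.date()] += 1
    return dict(switches)


events = [
    (datetime(2024, 5, 6, 9), "API-12"),
    (datetime(2024, 5, 6, 10), "API-12"),
    (datetime(2024, 5, 6, 11), "BUG-7"),   # switch
    (datetime(2024, 5, 6, 14), "API-12"),  # switch back
    (datetime(2024, 5, 7, 9), "API-12"),
    (datetime(2024, 5, 7, 15), "OPS-3"),   # switch
]
print(task_switches_per_day(events))
# {datetime.date(2024, 5, 6): 2, datetime.date(2024, 5, 7): 1}
```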

Work in Progress (WIP)

Signal:

Active tasks per developer or team.

Reveals:

Cannot tell you:

Becomes dangerous when:

Teams redefine “in progress” to reduce visible WIP.
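WIP is a point-in-time count. The sketch assumes a hypothetical `WorkItem` with start and finish timestamps, taken from status transitions rather than manual labels. Deriving the timestamps this way makes "in progress" harder to quietly redefine.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class WorkItem:
    # Hypothetical record; timestamps would come from status transitions.
    team: str
    started: datetime
    finished: Optional[datetime]  # None while the item is still in progress


def wip_by_team(items: list[WorkItem], at: datetime) -> dict[str, int]:
    """Number of items each team had in progress at the moment `at`."""
    counts = Counter(
        item.team
        for item in items
        if item.started <= at and (item.finished is None or item.finished > at)
    )
    return dict(counts)


items = [
    WorkItem("payments", datetime(2024, 5, 1), datetime(2024, 5, 3)),
    WorkItem("payments", datetime(2024, 5, 2), None),
    WorkItem("payments", datetime(2024, 5, 4), None),
    WorkItem("search", datetime(2024, 5, 1), datetime(2024, 5, 6)),
    WorkItem("search", datetime(2024, 5, 7), None),
]
print(wip_by_team(items, datetime(2024, 5, 5)))  # {'payments': 2, 'search': 1}
```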

Some developer productivity metrics distort behavior more than they clarify it.

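Lines of code: Encourages verbosity. Penalizes cleanup.

Commits per day: Encourages artificial commit splitting.

Time tracking for utilization: Destroys trust. Encourages busyness over value.

Raw story point comparisons: Points inflate over time and are not comparable across teams.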

If a metric rewards activity instead of outcome, it will eventually degrade quality.

Developer productivity metrics should support teams, not surveil them.

Metrics are conversation starters.

Instead of:

“Why is your PR time high?”

Ask:

“What’s blocking flow in this stage?”

The difference is cultural, and it determines whether metrics build trust or destroy it.

Developer productivity metrics matter only if they influence business performance.

Developer efficiency metrics are not business metrics, but they are leading indicators of them.

When flow improves and rework declines, delivery becomes more predictable. When cognitive load stabilizes, teams regain capacity for innovation.

Implication: When a CTO presents engineering metrics to a board, the conversation shouldn't be about lead time in isolation. It should be "our lead time has improved 30% over six months, which means we're delivering customer value faster, running smaller and safer releases, and reducing the operational cost of each deployment." The metric is the mechanism; the business outcome is the point.
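The "improved 30%" framing depends on direction: for lead time, improvement means the number went down. Here is a small sketch of that arithmetic. The 50-hour and 35-hour figures are purely illustrative.

```python
def percent_improvement(baseline: float, current: float, lower_is_better: bool = True) -> float:
    """Relative improvement from baseline to current, as a percentage.

    Use lower_is_better=True for metrics like lead time or change failure rate,
    and False for metrics like deployment frequency.
    """
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    change = (baseline - current) if lower_is_better else (current - baseline)
    return 100 * change / baseline


print(percent_improvement(50, 35))                         # 30.0 (median lead time, hours)
print(percent_improvement(10, 13, lower_is_better=False))  # 30.0 (deploys per week)
```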

Developer productivity metrics are not about measuring how hard engineers work.

They are about understanding:

When used correctly, they reveal system friction. When used incorrectly, they create it.

Measure to understand. Interpret to improve. Never to control.

Developer productivity metrics are quantitative signals that indicate how efficiently engineering work flows through a system, how stable the output is, and how sustainably teams operate.

They measure system health, not individual performance.

High-signal metrics include:

Lead Time for Changes

PR Cycle Time

Rework Rate

Change Failure Rate

Work in Progress

The right set balances speed, quality, and sustainability.

Avoid:

Lines of code

Commits per day

Time tracking for utilization

Raw story point comparisons

These metrics often create unintended incentives.

Junior developers may show longer PR cycles due to learning curves.

Senior developers may show lower output volume due to mentoring and architectural work.

System-level metrics reduce unfair individual comparisons.

Speed metrics such as PR cycle time are useful flow indicators, but only when paired with quality signals.

Fast merges with rising defect rates indicate instability.
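One way to pair speed with quality is to flag any period in which cycle time fell while the defect rate rose. The sketch assumes a hypothetical per-period summary holding a median cycle time and a defect rate (for example, change failure rate).

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    # Hypothetical per-period summary.
    label: str
    cycle_time_hours: float  # e.g. median PR cycle time for the period
    defect_rate: float       # e.g. change failure rate for the period


def flag_speed_without_stability(periods: list[PeriodStats]) -> list[str]:
    """Labels of periods where merges got faster but the defect rate went up."""
    flagged = []
    for prev, curr in zip(periods, periods[1:]):
        faster = curr.cycle_time_hours < prev.cycle_time_hours
        less_stable = curr.defect_rate > prev.defect_rate
        if faster and less_stable:
            flagged.append(curr.label)
    return flagged


periods = [
    PeriodStats("2024-01", 30.0, 0.10),
    PeriodStats("2024-02", 26.0, 0.09),  # faster and more stable
    PeriodStats("2024-03", 18.0, 0.16),  # faster but less stable
    PeriodStats("2024-04", 20.0, 0.12),
]
print(flag_speed_without_stability(periods))  # ['2024-03']
```

Month-to-month comparisons are noisy. A flag like this is a prompt for a conversation, not a verdict.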

Use:

Team-level aggregation

Trend analysis

Cross-metric correlation

Anonymous satisfaction feedback

Focus on system improvement.
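Team-level aggregation and trend analysis can be combined in one step. The sketch assumes hypothetical per-PR records with `team`, `month`, and `cycle_time_hours` keys. It deliberately ignores any author field, so the output describes teams over time rather than ranking individuals.

```python
from collections import defaultdict
from statistics import median


def team_trends(records: list[dict]) -> dict[str, list[tuple[str, float]]]:
    """Median cycle time per team and month, in month order.

    Any "author" key in the records is ignored on purpose.
    """
    buckets: dict[tuple[str, str], list[float]] = defaultdict(list)
    for record in records:
        buckets[(record["team"], record["month"])].append(record["cycle_time_hours"])

    trends: dict[str, list[tuple[str, float]]] = defaultdict(list)
    # Sorting by (team, month) keeps each team's series in chronological order.
    for (team, month), values in sorted(buckets.items()):
        trends[team].append((month, median(values)))
    return dict(trends)


records = [
    {"team": "payments", "author": "a", "month": "2024-04", "cycle_time_hours": 30.0},
    {"team": "payments", "author": "b", "month": "2024-04", "cycle_time_hours": 20.0},
    {"team": "payments", "author": "a", "month": "2024-05", "cycle_time_hours": 12.0},
    {"team": "payments", "author": "c", "month": "2024-05", "cycle_time_hours": 18.0},
    {"team": "payments", "author": "b", "month": "2024-05", "cycle_time_hours": 16.0},
    {"team": "search", "author": "d", "month": "2024-05", "cycle_time_hours": 40.0},
]
print(team_trends(records))
# {'payments': [('2024-04', 25.0), ('2024-05', 16.0)], 'search': [('2024-05', 40.0)]}
```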

Weekly: anomaly detection

Monthly: trend analysis

Quarterly: strategic alignment

Metrics should guide improvement cadence, not daily pressure.
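For the weekly anomaly check, a robust score avoids chasing ordinary noise. The sketch below swaps in one common technique, the modified z-score. It compares this week's value with the median and median absolute deviation (MAD) of recent weeks, and 3.5 is the conventional cutoff. The minimum history length of four weeks is an assumption to tune.

```python
from statistics import median


def is_anomalous(history: list[float], latest: float, threshold: float = 3.5) -> bool:
    """Flag `latest` if its modified z-score against `history` exceeds `threshold`.

    The median and MAD are used instead of the mean and standard deviation, so
    one unusual past week cannot distort the baseline much.
    """
    if len(history) < 4:
        return False  # assumption: too little history to judge
    center = median(history)
    mad = median([abs(x - center) for x in history])
    if mad == 0:
        # More than half the history sits exactly on the median; any deviation stands out.
        return latest != center
    modified_z = 0.6745 * (latest - center) / mad
    return abs(modified_z) > threshold


weekly_cycle_time = [22.0, 25.0, 24.0, 21.0, 26.0, 23.0, 24.0, 25.0]  # hours
print(is_anomalous(weekly_cycle_time, 41.0))  # True
print(is_anomalous(weekly_cycle_time, 26.0))  # False
```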
