AI has impacted software development in a major way. We are witnessing a systemic shift across the entire SDLC. It is no longer just about accelerating coding speed; it is about fundamentally transforming how code is generated, reviewed, validated, and delivered.

Consequently, this transition redefines our metrics for success across five key areas: productivity, quality, stability, workflow economics, and team dynamics.

Recently, the AI giant behind Claude published an engineering post detailing the ongoing shift in how software is built.

In it, Anthropic describes an experiment in which multiple AI agents worked as a team to build a C compiler. Over two weeks and thousands of sessions, the system produced a 100,000-line compiler capable of building the Linux kernel.

This was not autocomplete, nor was it developers prompting an assistant to write a function for them.

It was a completely autonomous, multi-agent system. The project consumed billions of tokens and about $20,000 in compute. Had humans done the same job, it would have taken a full engineering team and a much longer timeline.

For now, let’s set aside how Anthropic built it and focus on what it actually reveals about the impact of AI on software development.

And this is just a preview of how AI will take the software development world by storm.

The irony, however, is that most engineering teams around the globe only partially understand ‘AI impact’.

We have figured out how to code faster with AI, but we haven’t fully understood what it is doing to the development system underneath. As a result, we end up measuring AI’s impact on software engineering the wrong way.

The usual metrics offer useful signals, but they miss the bigger change.

It is worth adding that AI does not just make developers more productive; it changes the behavior of the entire delivery system and upends the old economics of change and the cost of failure. Here is how…

When AI enters your SDLC:

Given trade-offs like these, if you measure AI impact merely through vanity metrics such as lines of code per day, PR merge rate, or deployment count, you will never capture its true impact.

The real AI impact lies well beneath the vanity metrics and can only be measured by considering…

So the real impact of generative AI on software development is not speed. It’s how AI reshapes the balance between change, risk, and stability.

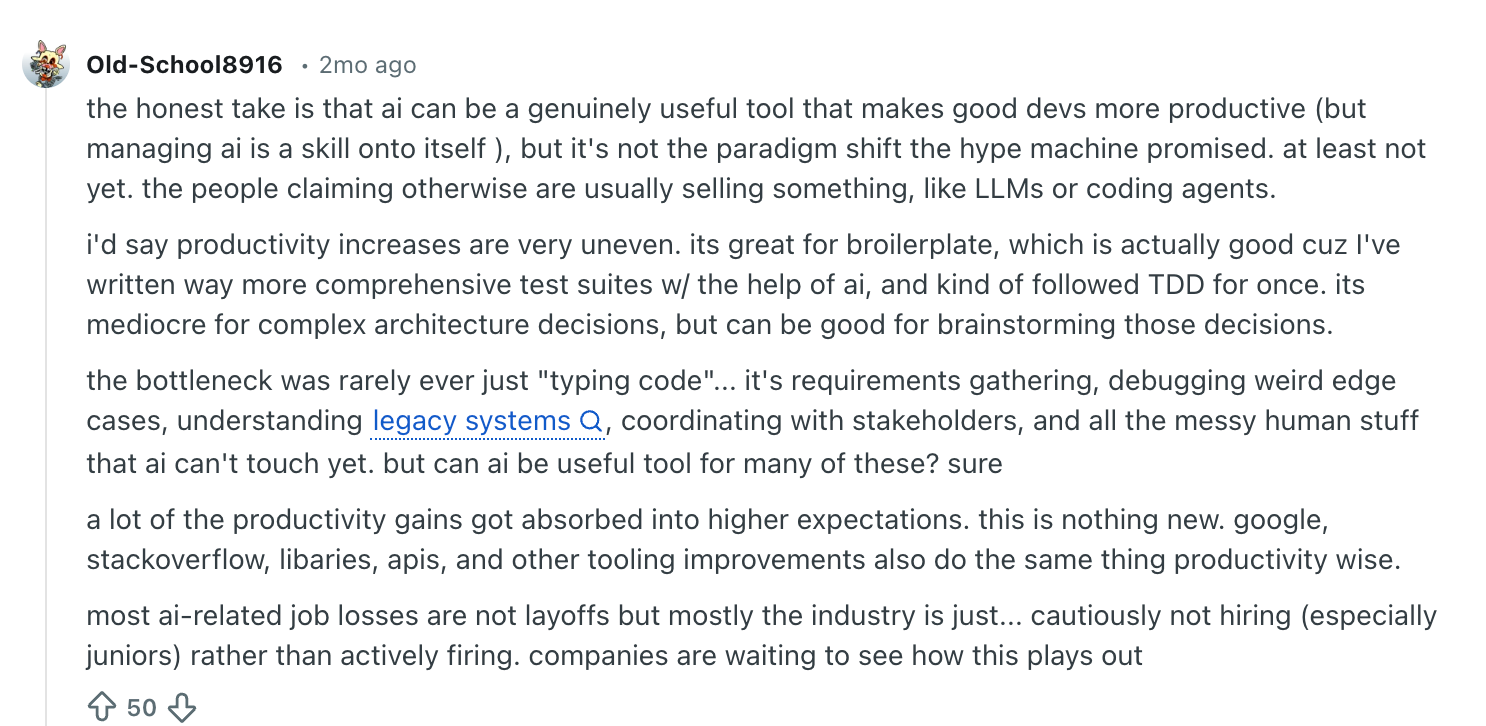

In one Reddit thread, users argued that AI impact is not linear and cannot be measured or understood in a straightforward way; in reality, it is much more complex. One Redditor raised a standout question: “If AI is making teams 5–10× faster, where is the explosion of new software? Where are the massive waves of small teams shipping complex products?”

In most cases, AI impact becomes visible at different levels.

At this stage, AI looks like a productivity tool. But system-level metrics may not change yet.

This is where the productivity paradox surfaces. Developers are faster, but the system is not.

At this stage, AI becomes part of the engineering operating model.

This is why the impact of generative AI on software development often remains unclear: if leaders measure only Stage 1, they will see faster developers, more PRs, and higher activity. The real impact appears in Stages 2 and 3, and it only becomes clear when those effects are connected back to Stage 1.

AI is often associated with code generation. But its real impact is spread across the entire lifecycle of software delivery.

AI is changing…

Thus, let’s understand the impact of AI on the software development lifecycle by looking at each stage individually.

This reflects the real shift caused by AI…

- Code becomes easier to generate

- System bottlenecks move to reviews, testing, and stability

So the impact of AI on the software development lifecycle must be measured by correlating AI-written code with real delivery outcomes such as…

AI adoption among developers has grown faster than that of any other tool in recent years.

According to JetBrains’ 2025 survey, 85% of developers regularly use AI tools in their workflows. Moreover, a controlled experiment published in this research paper found that developers using GitHub Copilot completed the assigned task 55.8% faster than those without it.

However, 66% of developers are not confident that the metrics used to measure their productivity are truly representative.

So, let’s look at this topic beyond productivity metrics alone.

At the individual level, AI clearly changes how developers work.

Rather than writing everything from scratch, developers can now…

This AI-powered approach to software engineering shifts the developer’s role from pure code author to code generator, validator, reviewer, and integrator. Developers are now more accountable for delivery and business outcomes than ever.

This is where the developer productivity paradox becomes real. On one hand, developers achieve faster time to first commit and more pull requests per developer. On the other hand, smaller and more frequent changes consume most of their time, and code churn skyrockets.

AI reduces certain types of cognitive load; put differently, developers offload part of their thinking to AI. But this, again, comes with a trade-off.

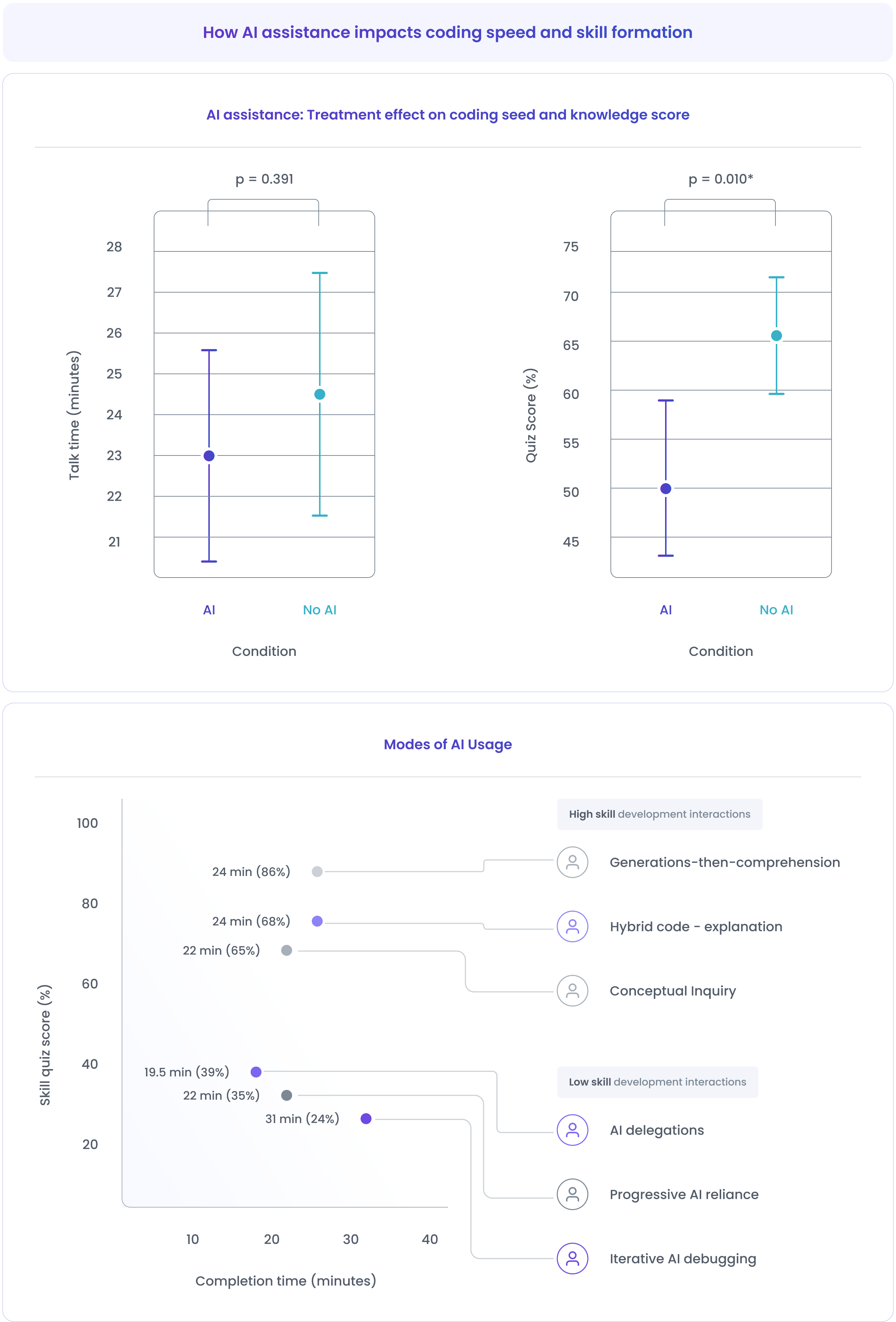

Recent research from Anthropic clearly highlights this negative impact of AI on software developers. The study found that developers who used AI assistance scored 17% lower on a skills quiz than those who coded without AI.

According to that study, the most affected skills were debugging and code comprehension.

Debugging is not just fixing errors.

It involves…

Before AI, developers built debugging skills naturally because they wrote most of the code themselves.

Now, large parts of the code are AI-generated, and developers often accept it without understanding the full logic.

This creates a new pattern. When something breaks, developers are not sure what to look for or where to start. They find it difficult to reverse-engineer AI-generated code and understand unfamiliar logic.

This way, while AI reduces the effort of writing code, it increases the difficulty of debugging.

Code comprehension is another crucial skill that is now degrading due to AI. It is the ability to read code quickly, understand its intent, predict its behavior, and modify it safely.

AI changes the learning loop. Developers rely on AI explanations instead of reasoning through the code. Over time, this leads to a shallower understanding of core concepts and difficulty tracing complex logic.

This gap in understanding is especially risky in large systems, performance-critical components, distributed architectures, and security-sensitive code.

One of the biggest promises of AI in software development is that it improves developer flow. That is true, but only up to a point.

AI helps developers stay in the zone without context switching by…

But the reality becomes more complex and unpredictable in large or unfamiliar systems.

AI-generated code often…

The cumulative effect of these changes alters the very nature of developer flow. Developers now experience…

And in the worst case, it triggers micro-context switches…

In short, AI reduces context switching in simple tasks, but it can increase it in complex systems.

For decades, organizations have tied developer productivity to the number of lines of code developers write in a given period. The most common, but ineffective, ways to measure developer productivity are…

In AI-SDLC, these metrics become misleading.

Leveraging AI, developers can generate large volumes of code instantly, inflate PR counts, and increase commit activity. However, these numbers only show activity and not outcomes.

These numbers do not capture the side effects of AI-generated code, such as rework, review bottlenecks, change failure rate, and tech debt.

Example:

A team that previously shipped 40 pull requests per week now ships 90 PRs per week. On paper, productivity looks healthy.

But a closer look shows…

Thus, in AI-SDLC, the key question is no longer: How much code did developers produce?

It becomes: How much reliable, maintainable, and valuable change reached production?

AI improves some aspects of code quality and maintainability, but it also introduces new risks at the system level. Here is a detailed look at the trade-off between faster output and long-term maintainability.

The core pattern is similar across all areas: AI improves local code quality but increases system-wide complexity. In other words, it moves the bottleneck away from coding and toward review, testing, and system stability.

AI does not accelerate just one step. It increases the volume, velocity, and frequency of changes moving through the entire system. This creates a second-order effect across…

For more than a decade, the DORA reports have defined how elite engineering teams measure results, and they are now helping teams prepare for the next big AI moment.

Their research consistently shows that elite engineering teams aren’t just faster; they are faster and more stable at the same time.

The four core DORA metrics remain the foundation:

However, DORA’s 2025 State of AI-Assisted Software Development report adds a new dimension to this model: how AI affects these outcomes.

One of the key findings is that AI acts as an amplifier, not a fix. It amplifies whatever strengths and weaknesses already exist in your engineering pipeline.

The report also shows a clear pattern…

Another key insight from the report is that real results from AI come not from AI tooling itself but from improving the workflows and organizational systems around AI-driven development.

Last year, Hivel CEO Sudheer Bandaru hosted DORA report author Benjamin Good in a webinar, where Good reinforced a crucial idea: AI does not automatically create high-performing teams; it accelerates whatever system already exists.

Thus, leaders should shift their focus from AI tooling to the underlying delivery system in order to increase speed without sacrificing stability and turn AI-driven activity into real, measurable engineering outcomes.

And that underlying delivery system with its associated AI impact is best understood through the DORA lens:

AI can improve the first two. But without strong engineering practices, it often degrades the last two.

That is why the impact of generative AI on software development is not just about speed, but about pairing speed with stability.

AI changes code. But more importantly, it changes people and structures.

Every major shift in software history reshaped not just tools, but…

AI is doing exactly the same. And in several ways, the cultural change caused by AI is deeper than its technical change.

Because now the real differentiator is not code generation but…

For decades, coding speed and implementation skill were core signals for assessing the ability of any developer. AI weakens that signal.

Because when large parts of the code can be generated using AI, the bottleneck shifts to areas where AI still struggles, such as…

With that, the role of developers changes…

From:

Someone who produces code

To:

This leads to a crucial shift in how many organizations perceive engineers: the most valuable engineers are not just those who code faster, but those who understand the system as a whole and know how their daily use of AI can snowball into business outcomes, for better or worse.

At first sight, it seems like AI flattens the experience curve.

Junior devs can now…

But the deeper system effect is different.

Junior devs tend to use AI for quick wins. They focus on getting code to compile, getting tests to pass, and delivering functional output fast. This creates a very dangerous loop.

Over time, these quick wins encourage less critical thinking, more reliance on AI output, and reduced focus on edge cases and long-term design decisions.

Senior devs, by contrast, use AI very differently. They use AI for cognitive offloading, letting it handle routine tasks while they focus on higher-level decisions.

For example, senior devs use AI to…

But their judgment remains the primary decision authority. They treat AI as a secondary assistant and not the source of truth.

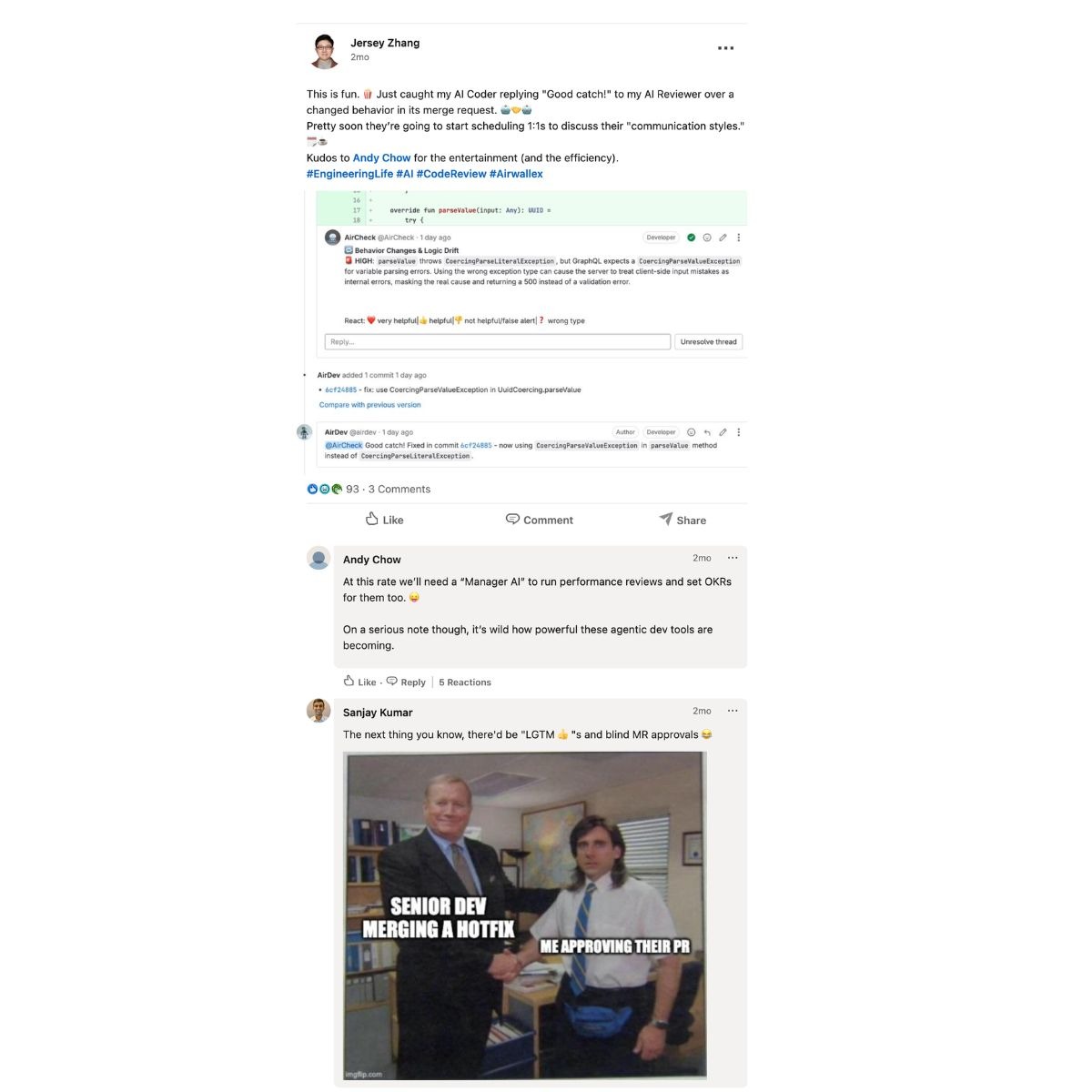

In this Reddit thread, one senior dev pointed out the same problem of junior devs copy-pasting AI suggestions without understanding the logic.

The ripple effect is even more damaging than that Reddit thread suggests. Senior devs reviewing junior devs’ AI-generated code end up spending more time reviewing shallow code, fixing edge cases, and stabilizing systems, time they could otherwise invest in architecture, system improvements, and long-term decisions.

In this blog, we tried to peek inside the brains of junior devs who code with AI and senior devs who review it - to understand their different cognitive models.

AI usage creates a new balance that engineering teams must manage.

If teams place too much trust in AI…

And if they place too little trust…

The healthy engineering approach is to treat AI as an assistant, not an authority.

The following Who Owns What framework can help you define the boundaries between AI and humans.

Traditionally, engineers built skills through…

AI shortens that process. Developers can now…

This speeds up learning in the short term, but it creates a risk of shallow learning.

Over time, developers may…

The goal is not to limit AI, but to shape how it is used.

To tackle this, engineering leaders should encourage…

AI does not create the same outcome for every type of organization. The same AI tool that helps a startup gain velocity can introduce risk, instability, and governance challenges in a large enterprise.

Key takeaway:

At a small scale, AI mostly accelerates coding; at a large scale, it changes the risk profile, coordination costs, and economics of the entire delivery system.

Most AI conversations in engineering start with productivity. But productivity alone does not equal value.

A team can…

And still end up with…

So, the real challenge for the engineering team is not adopting AI, but measuring the impact of AI in software development.

Productivity metrics usually measure activity such as…

These metrics are already weak proxies for value, and in an AI-assisted SDLC they become even less reliable.

AI can inflate code volume and increase PR counts. But none of these guarantees…

A team might double its activities and still…

So the real question is: did AI increase valuable, reliable change, or just activity?

This question defines whether AI is creating true engineering ROI.

To understand the real AI impact, teams must move from activity metrics to outcome metrics.

Some of the most useful system-level indicators include…

However, true AI impact can only be revealed by correlating related metrics and viewing them as a whole. For example…

- PR volume + Review time = Review bottleneck signal

- Deployment frequency + Change failure rate = Speed vs stability trade-off

- Coding time + Rework rate = Low-quality code generation signal
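To make these pairings concrete, here is a minimal Python sketch that raises each combined signal only when both of its metrics move in the wrong direction against a baseline period. The `WeeklyMetrics` fields, the 20% threshold, and the reading of "coding time" as falling while rework rises are illustrative assumptions, not a standard definition or Hivel's own method; the before/after PR counts reuse the 40-to-90 example from earlier, and the remaining numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class WeeklyMetrics:
    # Hypothetical weekly team aggregates; field names are illustrative only.
    pr_count: int
    median_review_hours: float
    deployments: int
    failed_deployments: int
    coding_hours: float
    rework_ratio: float  # assumed: share of changed lines that rewrite recent code (0..1)

    @property
    def change_failure_rate(self) -> float:
        return self.failed_deployments / self.deployments if self.deployments else 0.0


def rose(current: float, baseline: float, threshold: float) -> bool:
    """True if `current` is more than `threshold` times `baseline` (1.2 means +20%)."""
    if baseline == 0:
        return current > 0  # any increase from zero counts as a rise
    return current / baseline > threshold


def correlated_signals(base: WeeklyMetrics, now: WeeklyMetrics,
                       threshold: float = 1.2) -> dict[str, bool]:
    """Flag each paired signal only when BOTH of its metrics moved the wrong way."""
    return {
        # More PRs and slower reviews: reviewers are becoming the constraint.
        "review_bottleneck": rose(now.pr_count, base.pr_count, threshold)
        and rose(now.median_review_hours, base.median_review_hours, threshold),
        # More deployments and a higher change failure rate: speed is costing stability.
        "speed_vs_stability": rose(now.deployments, base.deployments, threshold)
        and rose(now.change_failure_rate, base.change_failure_rate, threshold),
        # Less coding time but more rework: output is fast but not durable.
        "low_quality_generation": now.coding_hours < base.coding_hours
        and rose(now.rework_ratio, base.rework_ratio, threshold),
    }


# Hypothetical before/after weeks; all three signals are flagged for these numbers.
before = WeeklyMetrics(40, 6.0, 10, 1, 120.0, 0.10)
after = WeeklyMetrics(90, 14.0, 20, 4, 80.0, 0.18)
print(correlated_signals(before, after))
```

Requiring both metrics to move is the point of the design: a jump in PR volume alone is just activity, but paired with slower reviews it points to a bottleneck.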

Not all metrics behave the same way. Some metrics show problems early, whereas others reveal them only once they reach production.

A few examples of leading metrics or indicators are…

In most cases, these metrics change soon after AI adoption.

Lagging indicators are…

By the time these metrics worsen, the system has already absorbed the impact of AI in software engineering.

DORA metrics are valuable because they…

But in an AI-assisted environment, DORA metrics alone are not enough. They are lagging indicators. They tell you whether your delivery system improved or degraded.

But they do not tell you why it happened, where the bottleneck is, or how AI is affecting the workflow.

For example:

This is where additional workflow-level metrics become crucial: leaders need visibility into review cycles, rework rates, and code churn, because these signals explain how AI is changing the system.


It is now widely evident that AI is changing the economic, operational, and engineering structure of software development.

In traditional development systems…

AI reverses this. The following are the top risks introduced by AI, grouped into three major categories.

Strategic takeaway:

Before AI, the main cost was writing code. After AI, the main costs shift to:

By this point, one pattern should be clear: AI does not change only coding speed; it changes the economics, bottlenecks, and risk profiles of software delivery.

So, AI adoption cannot be treated as…

It must be treated as a system-level transformation.

The following framework helps leaders approach AI adoption in a disciplined way.

1) Principles for responsible AI adoption

2) Where to experiment vs standardize

Experiment at the edges. Standardize at the core. For example…

3) Align AI use with business outcomes

Leaders should connect AI adoption to business outcomes, such as…

The end goal is:

If you want to see how these AI-driven shifts are affecting your engineering system in real time and measure their impact with the right metrics, you need clear visibility into how AI affects delivery speed, code quality, stability, and team dynamics across the entire SDLC. It’s time to start measuring the system itself. Explore how Hivel can help you understand, manage, and optimize AI-driven development across your organization.

No. AI does not replace developers; it changes their role. Instead of spending most of their time writing code, developers can now focus more on system design, code review, integration, and stability and reliability. It is important to understand the boundary between AI and humans: AI handles repetitive tasks, while humans make architectural decisions, validate logic, and own production outcomes.

At the individual level, AI clearly improves coding speed and reduces repetitive effort. Many studies report faster task completion and higher pull request activity with AI tools. However, system-level productivity depends on what happens after code is written. AI can increase rework, review bottlenecks, and tech debt, which affects overall delivery. This is the productivity paradox introduced by AI.

Developer performance evaluates how well individual developers or teams execute their responsibilities (quality of work, collaboration, growth).

Productivity is about the system. Performance is about the people. Both matter, but require different measurement approaches.

AI can both improve and harm reliability. On the one hand, AI can generate tests, reduce system errors, and suggest fixes quickly, but on the other hand, it can introduce logic issues, generate inconsistent patterns, and increase change volume beyond system capacity. So, reliability depends less on the AI tool itself and more on review practices, testing systems, platform guardrails, and workflow design with clear ownership.

Teams should move beyond activity metrics such as lines of code, PR counts, and deployment frequency. Instead, they should track outcome metrics like lead time for changes, change failure rate, rework vs new work ratio, review cycle time, and deployment stability.
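As a rough illustration, several of these outcome metrics can be computed from deployment and pull request records. This is a minimal sketch under stated assumptions: the `Deployment` and `PullRequest` records are hypothetical, review cycle time is assumed to run from PR open to merge, and what counts as a failure or as rework is a team-specific choice. Lead time for changes and change failure rate follow the usual DORA definitions (commit to production, and the share of deployments that cause a failure).

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median

HOUR = timedelta(hours=1)


@dataclass
class Deployment:
    # Hypothetical record: one change deployed to production.
    committed_at: datetime
    deployed_at: datetime
    caused_failure: bool  # assumed: needed a rollback, hotfix, or incident response


@dataclass
class PullRequest:
    # Hypothetical record: one merged pull request.
    opened_at: datetime
    merged_at: datetime
    new_lines: int     # lines delivering new functionality
    rework_lines: int  # lines rewriting recently shipped code


def outcome_metrics(deploys: list[Deployment], prs: list[PullRequest]) -> dict[str, float]:
    """Compute a few outcome metrics for one reporting period."""
    if not deploys or not prs:
        raise ValueError("need at least one deployment and one pull request")
    new = sum(p.new_lines for p in prs)
    rework = sum(p.rework_lines for p in prs)
    return {
        # Lead time for changes: commit until running in production.
        "median_lead_time_hours": median((d.deployed_at - d.committed_at) / HOUR for d in deploys),
        # Change failure rate: share of deployments that caused a failure.
        "change_failure_rate": sum(d.caused_failure for d in deploys) / len(deploys),
        # Review cycle time (assumed definition): PR opened until merged.
        "median_review_cycle_hours": median((p.merged_at - p.opened_at) / HOUR for p in prs),
        # Rework vs new work: rework lines per line of new work.
        "rework_to_new_ratio": rework / new if new else float("inf"),
    }


# Hypothetical one-week sample.
start = datetime(2025, 1, 6, 9, 0)
deploys = [
    Deployment(start, start + timedelta(hours=h), failed)
    for h, failed in [(20, False), (30, True), (40, False), (50, False)]
]
prs = [
    PullRequest(start, start + timedelta(hours=4), new_lines=300, rework_lines=100),
    PullRequest(start, start + timedelta(hours=10), new_lines=100, rework_lines=100),
]
metrics = outcome_metrics(deploys, prs)
print(metrics)
```

Medians are used for the time-based metrics so that a few unusually slow changes do not dominate the result.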

Yes, AI can be safe for enterprise software development, provided organizations put proper controls in place for security policies, data exposure risks, intellectual property protection, compliance requirements, and tool standardization. Most large organizations adopt AI through approved toolchains, platform-level guardrails, strict review processes, and secure model environments.
